Europe often presents itself as a continent focused on the future: open borders, shared institutions, economic cooperation, and democracy replacing centuries of conflict. Yet many of today’s political tensions — from the war in Ukraine to debates about migration and nationalism — are deeply rooted in Europe’s past.

That is the central argument of Michael Neiberg, an American historian at the United States Army War College and one of the leading experts on modern European history. In a recent lecture, Neiberg argued that Europe’s current crises only make sense when viewed through the long historical forces that shaped the continent.

His message is simple but powerful: history does not disappear. It remains visible in borders, political culture, economics, and even voting patterns.

Europe Was Built on the Collapse of Empires

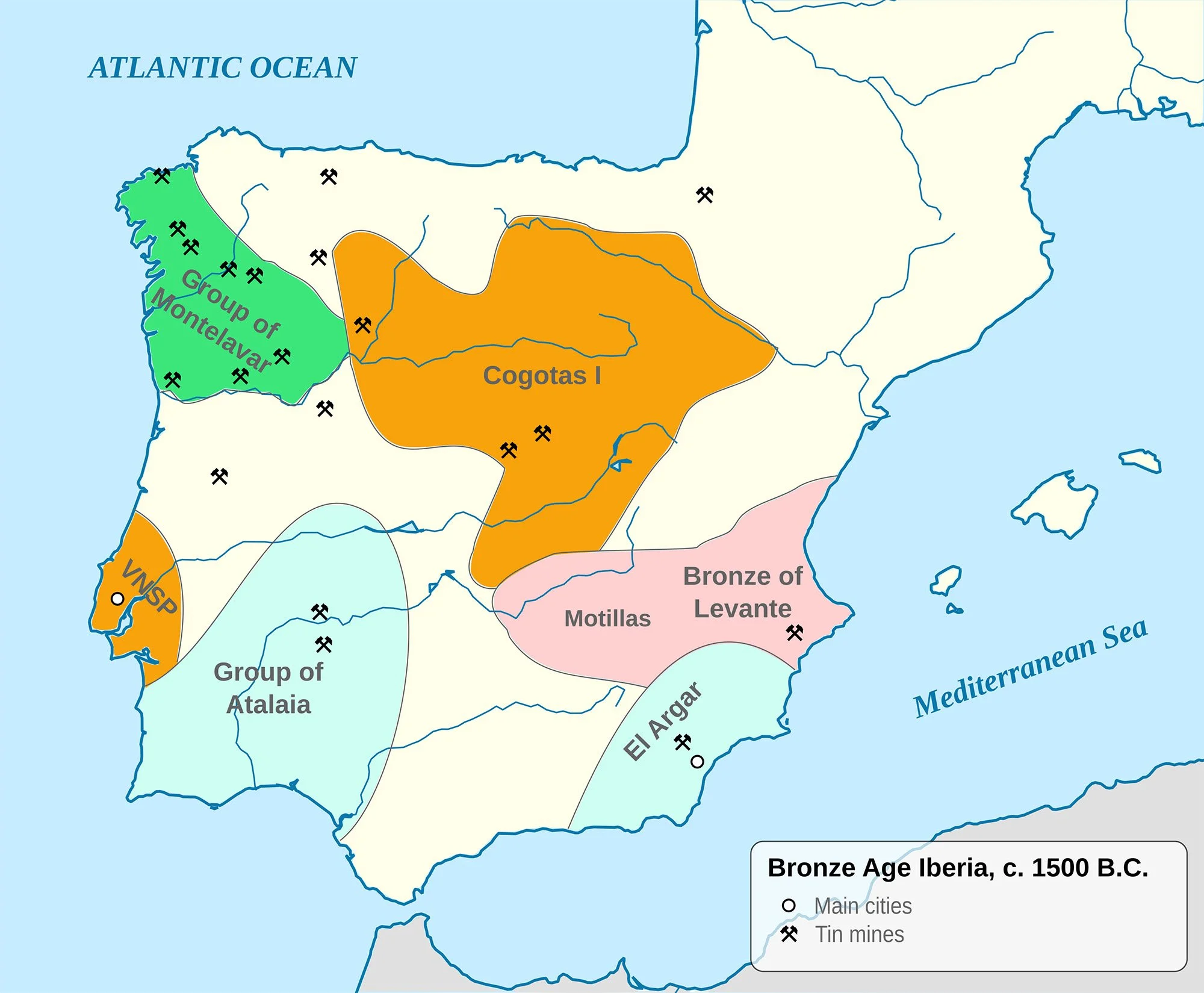

Before World War I, Europe looked very different from today. Much of the continent was ruled not by nation-states but by vast empires such as the Austro-Hungarian, Ottoman, Russian, and German Empires. These were multi-ethnic worlds where different peoples lived under the same imperial structures.

Then, between 1917 and 1922, all four collapsed.

After the war, European leaders tried to rebuild the continent around the idea of national self-determination: each people should ideally have its own state. But reality was far messier. Populations were mixed together, borders rarely matched identities, and millions suddenly became minorities inside newly created countries.

Neiberg argues that many of Europe’s current divisions still follow these older fault lines. Election maps in places like Poland, Romania, and Germany continue to reflect borders drawn generations ago. Even decades after the end of the Cold War, the old divide between East and West Germany remains visible in politics and economics.

History, in other words, leaves very long shadows.

The Optimism After the Cold War

After the collapse of the Soviet Union, many Europeans believed that history had entered a new phase. Liberal democracy and free markets appeared to have won decisively. Economic integration would make future wars unlikely.

Trade became the new foundation of European thinking.

Germany in particular believed that economic cooperation could transform former rivals into reliable partners. Russian gas would tie Russia to Europe. Trade with China would encourage stability and openness. Military confrontation increasingly seemed like something belonging to the twentieth century.

For a while, this vision appeared successful.

But Neiberg argues that Europe misunderstood its own history. Economic ties alone did not erase geopolitical ambitions or historical grievances. Russia did not become the predictable partner many hoped for. Instead, the invasion of Ukraine shattered much of Europe’s post-Cold War optimism.

The result is a Europe suddenly forced to rethink assumptions that had guided it for decades.

A Continent Rediscovering Hard Power

The war in Ukraine has pushed Europe back into questions many believed had been solved: territorial security, military power, and the possibility of large-scale war on the continent.

Countries such as Poland and the Baltic states warned for years that Russia remained a threat. Their historical experience under Soviet domination shaped a very different understanding of security from that of Western Europe.

Now much of Europe is moving in their direction.

Germany, long reluctant to think of itself as a military power, is rebuilding its armed forces and increasing defense spending. The idea that economic interdependence alone guarantees peace no longer feels convincing.

At the same time, Europeans increasingly wonder how durable American support will prove. Photographs of European leaders discussing security without the United States, and of American officials discussing Europe with Russia without Europeans present, have created unease across the continent.

Once again, history shapes perception. Europe remembers periods when American engagement weakened, and many now fear a return to a more distant and transactional relationship.

Migration and the Legacy of Empire

Neiberg also points to migration as another example of history’s persistence.

Many migrants arriving in Europe come from regions once ruled by European empires in Africa and the Middle East. In countries such as France, these historical connections still influence debates about responsibility, identity, and belonging.

Europe faces a contradiction: many economies need migrant labor, yet large parts of the population remain uneasy about rapid demographic and cultural change.

These debates are not only about economics or borders. They are also about the unfinished legacy of empire.

History Does Not Repeat — But It Persists

Neiberg is careful not to argue that Europe is doomed to repeat the past. History is not destiny. But ignoring history can lead to dangerous illusions.

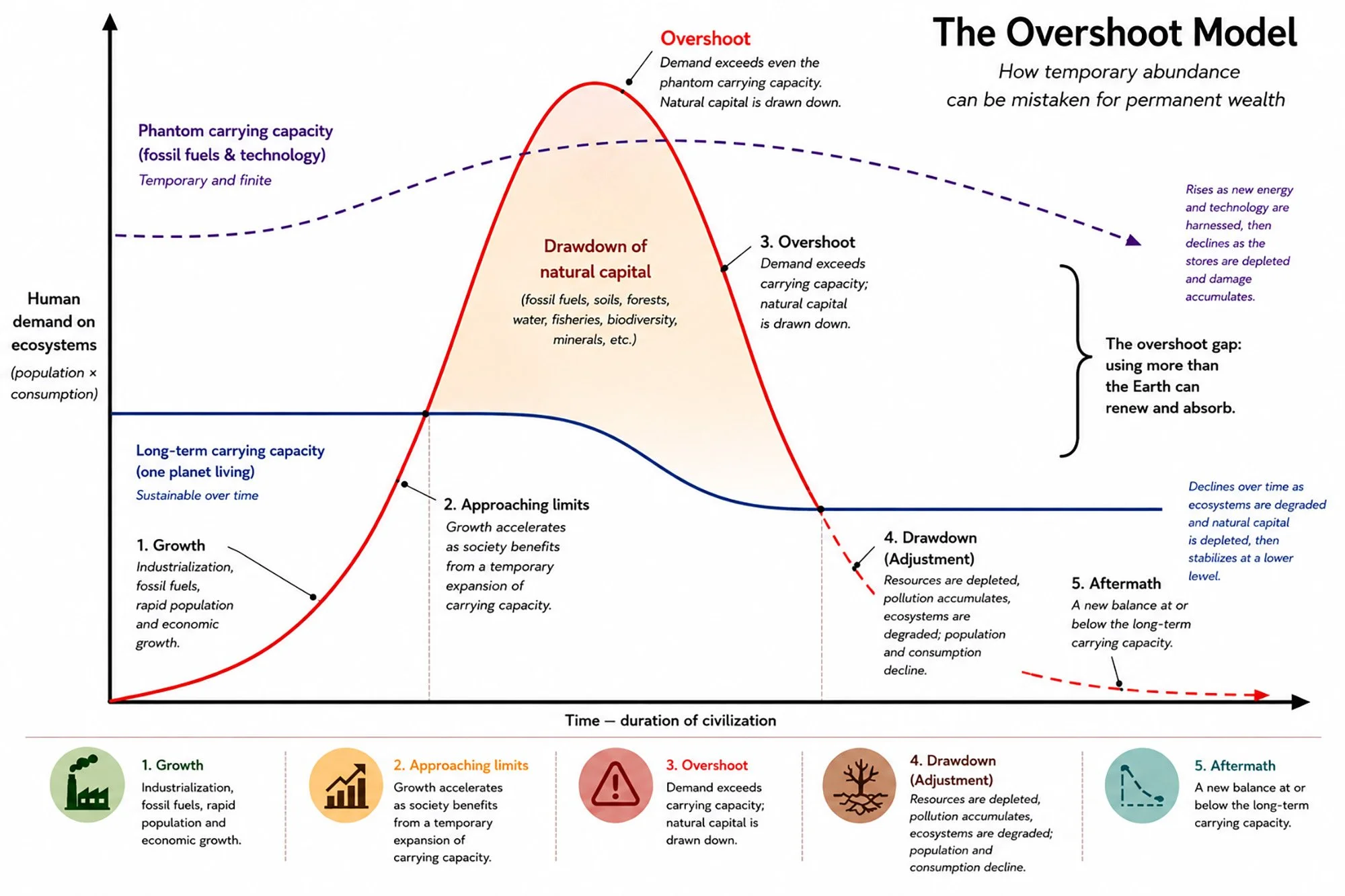

One of the clearest examples, he argues, was the belief that post-Cold War Europe had moved beyond traditional power politics altogether. The assumption that trade alone would create stability now appears far less certain than it did twenty years ago.

For Neiberg, the challenge is not to become trapped in history, but to understand how deeply it continues to shape the present.

Europe may look modern and post-national on the surface. Yet underneath, the continent still carries the structures, memories, and tensions created by empires, wars, borders, and ideological struggles stretching back more than a century.

The past has not disappeared. Europe is still negotiating with it every day.

Further reading

Michael Neiberg — Dance of the Furies: Europe and the Outbreak of World War I

Michael Neiberg — When France Fell: The Vichy Crisis and the Fate of the Anglo-American Alliance

Timothy Snyder — The Road to Unfreedom

Mary Sarotte — Not One Inch: America, Russia, and the Making of Post-Cold War Stalemate